The Evaluation Framework We Use When a Client Asks Us Which Agent Stack to Build On

Huzefa Motiwala April 21, 2026

When choosing an AI agent framework, it’s not about picking the most popular option – it’s about finding the one that meets your project’s specific needs. The wrong choice can lead to costly rewrites, high expenses, and performance issues in production. Here’s a quick breakdown of what matters most:

- Reliability: Can the framework handle errors and tool failures effectively?

- State Persistence: Does it support long-running tasks and recover from crashes?

- Multi-Agent Coordination: How well does it manage tasks between multiple agents?

- Observability: Are debugging tools detailed enough to fix issues quickly?

- LLM-Agnostic Architecture: Can you switch language models without major rewrites?

- Cost: Does the framework minimize token usage and infrastructure costs?

For simple workflows, plain tool-calling might be enough. For complex or regulated tasks, structured frameworks like LangGraph excel in reliability and error recovery. Always test frameworks with real-world failure scenarios before committing to avoid surprises in production.

I Tested Every AI Agent Framework So You Don’t Have To! (2026 Edition)

sbb-itb-51b9a02

6 Criteria That Matter in Production

When evaluating frameworks for production workloads, the differences between them become clear upon closer inspection. Here are six key factors that distinguish a prototype from a system ready for production.

Tool-Calling Reliability & Error Recovery

Failures are inevitable, but production systems must handle them gracefully. Common failure modes include ghost actions (tool calls that are generated but not executed), interrogation loops (repeatedly asking for the same information), and hallucinated tool calls (inventing APIs or parameters that don’t exist) [9][10].

"Only a metric that inspects the actual tool call trace would catch [false task completion]."

– Jeffrey Ip, Cofounder, Confident AI [9]

To test reliability, focus on two levels: component-level evaluation, which checks if the LLM extracts correct parameters for a tool, and end-to-end verification, which ensures the task is actually completed. Tools like OpenTelemetry, LangSmith, or W&B Weave can help trace tool calls and inspect the data exchanged between agents and tools [8][9][7].

Key metrics include:

- Argument Correctness: Do extracted parameters match the schema?

- Tool Selection Accuracy: Was the right tool chosen for the intent?

- Trajectory Efficiency: Were redundant steps or loops minimized? [9][10]

Introduce a validation node after each tool call to ensure results are correctly formatted before passing them back to the agent. Use safeguards like recursion_limit (LangGraph) or max_iter (CrewAI) to prevent infinite loops. For critical tools, such as those handling refunds or database deletions, implement an "interrupt" pattern requiring human approval before execution [12].

Next, consider whether the framework supports state persistence for long-running tasks.

State Persistence & Long-Running Workflows

Production agents often handle tasks that last minutes or even hours. A system crash during a multi-hour process shouldn’t mean starting over from scratch. Frameworks must support durable execution, allowing workflows to resume exactly where they left off [13][17].

Look for frameworks with robust checkpointing capabilities that save progress using persistent stores like PostgreSQL, Redis, or Cosmos DB. In a 2026 benchmark, LangGraph achieved a 96% error recovery rate due to its native checkpointing, compared to 72% for CrewAI and 68% for AutoGen [2].

For example, a B2B analytics startup in 2026 used LangGraph with PostgreSQL checkpointing to handle 2–4 hour research tasks. By incorporating three human approval gates (plan, findings, and final report) and leveraging LangGraph’s interrupt_before and checkpointing features, they avoided restarting tasks after crashes. This approach saved an estimated $180,000 in potential rework [17].

"If your agent workflow exceeds 5 minutes of wall-clock time, involves human approval steps, or needs to resume after failures, LangGraph’s persistence and checkpoint system will save you weeks of engineering work."

– iBuidl Research Team [1]

Avoid creating custom state-handling solutions. Instead, pin framework versions (e.g., crewai==1.10.1) to prevent breaking changes in state management [14][17].

Multi-Agent Coordination & Routing

When multiple agents work together, coordination becomes critical. You’ll need to choose between centralized orchestration (a supervisor controls all agents) and distributed choreography (agents coordinate independently). Centralized control is easier to audit, while distributed control allows for horizontal scaling but can complicate debugging [18].

The routing system must classify user intent accurately to avoid misdirected queries or "tool-call storms." For high-risk actions, frameworks should support interrupt primitives for human review before execution [7][19].

In July 2025, a Fortune 500 insurance company experienced a $63,000 cloud bill in just four hours due to an infinite loop causing 847,000 API calls. This was a direct result of missing state checkpoints [18].

"Unsophisticated agent deployments don’t fail gracefully – they fail expensively."

– Likhon, AI Engineer [18]

AutoGen’s conversational model averages 20+ LLM calls per task, making it 5–6× more expensive than structured orchestration frameworks like LangGraph, which averages 2–8 calls per task [2][9]. Use exponential backoff to prevent API rate-limit issues and regularly prune state to avoid "memory poisoning" and bloated context windows in long-running tasks [18].

Effective coordination pairs seamlessly with solid observability for fast issue resolution.

Observability & Debugging Experience

When something goes wrong in production, quick diagnosis is essential. The quality of trace data can mean the difference between a 10-minute fix and a 10-hour ordeal. Frameworks should integrate with observability tools like OpenTelemetry or offer native tracing features.

The best frameworks provide time-travel debugging, allowing you to inspect, rewind, and replay specific execution steps via saved checkpoints. This is invaluable for troubleshooting non-deterministic agent behavior [3][2]. Observability typically costs about $0.50 per 1,000 traces with tools like LangSmith [18].

Use "known bad conversations" (logs of past agent failures) to test your evaluation metrics and ensure they’re effective at catching real-world errors. As of 2025, 60% of organizations had deployed AI agents in production, yet 39% of AI projects still failed to meet expectations [11].

"Evaluation and CI/CD are critical for maintaining development velocity."

– Michael Dawson, Red Hat [8]

Observability tools reveal potential debugging challenges, shaping your framework evaluation process.

LLM-Agnostic Architecture vs. Vendor Lock-In

The LLM landscape evolves rapidly, so your framework should allow you to switch providers without extensive rewrites. While GPT-4 might dominate today, future advancements could shift the balance. Frameworks should avoid proprietary abstractions that tie you to a single vendor.

The Model Context Protocol (MCP) is emerging as a 2026 standard for connecting agents to external tools, reducing vendor lock-in and improving tool-call reliability [2][10]. Frameworks like LangGraph, which enforce typed state schemas, offer better portability than those relying on implicit state management [1][15].

Many organizations are transitioning from conversational prototypes to graph-based systems for finer control over execution flow [2][9]. Check whether agent definitions can move between frameworks or if they lock you into a specific ecosystem.

Total Cost of Ownership

When assessing frameworks, consider licensing, infrastructure, debugging, and potential migration costs. Conduct a 2-week evaluation to confirm cost efficiency. For instance, LangGraph Cloud’s Plus tier charges $0.001 per node plus a $155 monthly fee, while the Enterprise tier offers custom pricing with SLAs [18]. CrewAI’s Enterprise pricing remains undisclosed but includes managed infrastructure and role-based access controls [18].

AutoGen’s conversational model further inflates costs with its 20+ LLM calls per task compared to graph-based frameworks [2]. Hidden expenses, such as time spent debugging failures, extra infrastructure for state persistence, and migration costs if the framework doesn’t scale, should also be factored in [18].

The Scored Evaluation Template

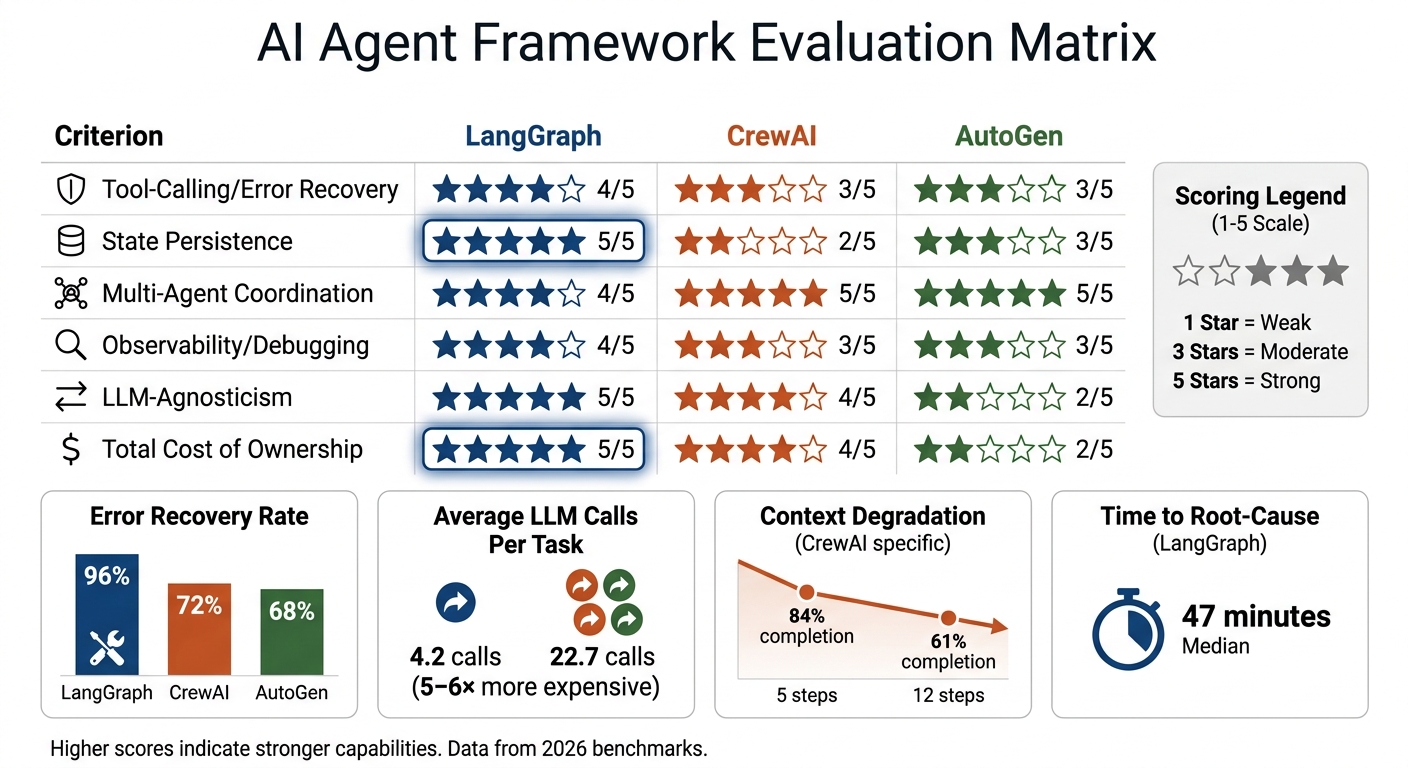

AI Agent Framework Evaluation Matrix: LangGraph vs CrewAI vs AutoGen

How to Score and Weight the Matrix

This matrix builds on six key production criteria to quantify the capabilities of each framework. It uses a 1–5 scale, where 1 indicates the weakest capability and 5 the strongest. Each criterion is scored independently, then multiplied by its assigned weight to calculate a final comparable score [17].

The weight distribution should align with the project’s stage. For instance, prototype projects might allocate 20–25% to "Ease of Setup", while production deployments often focus on "Production Readiness" and "Architecture Flexibility", assigning 25% to each [21]. To maintain balance, ensure the total weight equals 100% [22].

It’s essential to involve stakeholders, such as heads of product and senior managers, early in the process. This helps establish consensus on weight assignments and reduces team biases [22]. Documenting the reasoning behind these weight choices is equally important – it provides clarity when justifying trade-offs later on.

"The right framework choice is not about which framework is ‘best.’ It is about which framework’s abstraction model matches the specific shape of your problem."

– EngineersOfAI [17]

Worked Example: Scoring 3 Frameworks

Here’s an example comparing three leading frameworks using the six production criteria. Each framework is scored on a 1–5 scale. LangGraph stands out in state persistence and cost efficiency, while CrewAI and AutoGen excel in multi-agent coordination ergonomics [17][2][6].

| Criterion | LangGraph | CrewAI | AutoGen |

|---|---|---|---|

| Tool-Calling/Error Recovery | 4 | 3 | 3 |

| State Persistence | 5 | 2 | 3 |

| Multi-Agent Coordination | 4 | 5 | 5 |

| Observability/Debugging | 4 | 3 | 3 |

| LLM-Agnosticism | 5 | 4 | 2 |

| Total Cost of Ownership | 5 | 4 | 2 |

LangGraph’s 96% error recovery rate surpasses CrewAI (72%) and AutoGen (68%), making it the top choice for workflows that demand high reliability [2]. In contrast, AutoGen’s conversational model averages 22.7 LLM calls per task, compared to LangGraph’s 4.2 calls. This results in operational costs that are 5–6× higher for AutoGen [2]. For workflows exceeding five minutes or requiring human approval, LangGraph’s built-in checkpointing becomes indispensable [1].

To validate these scores, conduct a 2-week evaluation spike using a representative benchmark of 50 queries [17]. This hands-on testing will reveal whether the framework’s "happy path" aligns with your workload or if the abstraction model creates unnecessary obstacles during development [17].

Red Flags in Major Frameworks

After evaluating framework capabilities through a scored assessment, it’s equally important to spot warning signs that could lead to production challenges.

LangGraph (LangChain Ecosystem)

State management often becomes a stumbling block for teams. Modifying state schemas later in development can be a nightmare, and failing to return updated states may cause silent errors, leaving agents nonfunctional without any clear indication of failure [20]. Additionally, frequent state updates can lead to uncontrolled storage growth. Without proper pruning policies, this results in memory bloat and unmanageable storage demands [18].

LangGraph has the longest median time to identify root-cause failures, clocking in at 47 minutes [24]. Its default observability tools are limited – it doesn’t provide structured execution traces out of the box. To address this, you’ll need to build custom tracing solutions or integrate tools like LangSmith [24]. Another critical issue is the lack of a durable checkpoint store (e.g., PostgreSQL). Without one, any process crash results in the loss of all in-flight state [24].

CrewAI

Context degradation becomes a major issue during tasks that require multiple steps. Benchmark results show a significant drop in completion rates – from 84% at 5 steps to 61% at 12 steps [24]. Task outputs passed between agents can be truncated or summarized unexpectedly, leading to incomplete context transmission without any warnings [23]. Debugging is further complicated by the framework’s opaque internal agent protocol. Instead of inspecting structured outputs, developers often have to dive into the framework’s source code [24].

CrewAI was built to handle small agent groups (3–10 agents), and it shows. It lacks native queue management and runs agents sequentially by default, creating bottlenecks in production [1][20][21]. There’s also no built-in retry policy, meaning you’ll need to implement error handling manually for every task [24]. Additionally, the framework doesn’t track token consumption for individual agents, making cost attribution in multi-agent workflows a challenge without external tools [24].

While CrewAI struggles with context retention, other frameworks like AutoGen introduce their own set of challenges.

AutoGen

Token-burning loops are one of the most costly failure modes in AutoGen. Agents can get stuck in recursive "message loops", consuming massive amounts of tokens without making progress [24]. The lack of structured state exacerbates this issue, as agents lose track of their objectives during long sessions – a phenomenon known as conversation drift [18]. On average, the conversational model generates 20+ LLM calls per task, making it 5–6 times more expensive than frameworks using structured orchestration [2]. This directly impacts both production reliability and overall costs.

Execution traces in AutoGen are essentially conversation logs, which are difficult to process programmatically. Building a parser to make sense of these logs can take 3–5 days of engineering effort [24]. Additionally, ensuring deterministic behavior is a challenge, making it hard to reproduce specific failures for auditing purposes [24]. The absence of circuit breakers compounds the issue, allowing agents to retry failed operations endlessly, which can quickly drain budgets [24].

Beyond established frameworks, custom-built systems come with their own set of risks.

Custom Builds

While choosing an off-the-shelf framework takes minutes, developing a custom system with robust state persistence, retry mechanisms, and monitoring capabilities can take months [3]. Even then, custom solutions rarely offer the advanced debugging tools available in established ecosystems.

In July 2025, a Fortune 500 insurance company experienced a catastrophic failure with its custom multi-agent system. A hallucinated validation rule caused the system to enter an infinite loop, making 847,000 API calls over four hours. This resulted in a $63,000 cloud bill and a major production outage because the system lacked circuit breakers and state checkpoints [18].

"Naive agent deployments don’t fail gracefully – they fail expensively."

– Likhon, AI orchestration researcher [18]

Applying Business Context to Your Decision

Evaluation scores alone won’t determine the best framework for your needs. Even a framework that excels technically might fall short if it doesn’t align with your project stage, team dynamics, or risk tolerance. Let’s break down how different project phases impact the balance between rapid prototyping and robust execution.

Short-Term Speed vs. Long-Term Stability

The framework that helps you build an MVP quickly isn’t always the best choice for scaling. For example, CrewAI is known for its speed, enabling teams to create working prototypes in just 2–3 days [13]. A fintech client of Particula Tech demonstrated this in early 2026 by prototyping a dispute resolution agent within two days using CrewAI. However, when compliance demanded complex conditional branching and state rollbacks, they had to rebuild the system in LangGraph within a week [6].

On the other hand, LangGraph requires more upfront effort, often taking 1–2 weeks to learn. But it offers the precision and durability needed for complex workflows. For instance, in 2025–2026, Intuz used LangGraph to develop an agent for a healthcare client managing insurance prior authorizations. By leveraging context isolation at the graph node level, they boosted accuracy from 71% to 93% while meeting compliance requirements for state transitions [4].

Your project’s stage should guide your framework choice:

- For MVPs or prototypes, prioritize speed with tools like CrewAI or OpenAI Agents SDK.

- For complex scaling needs, frameworks like LangGraph or Mastra offer better state management and debugging capabilities.

- Regulated enterprises should focus on governance and compliance, favoring options like Rasa CALM or Microsoft Agent Framework.

| Project Stage | Recommended Stack | Primary Goal |

|---|---|---|

| MVP / Prototype | CrewAI, OpenAI Agents SDK | Speed to market, role definition |

| Complex Scaling | LangGraph, Mastra | State management, debugging, cycles |

| Regulated Enterprise | Rasa CALM, Microsoft Agent Framework | Governance, audit trails, self-hosting |

| Simple Automation | Plain Tool Calling (FastAPI/SDK) | Low latency, minimal complexity |

Before committing to a framework, map out your workflow. If your process doesn’t require loops, parallelism, or multi-hour persistence, plain tool-calling might be your best bet. It’s simpler to debug and can deliver up to 3× faster inference [14].

Decision Rules for Framework Selection

To choose the right framework, start by defining your non-negotiable requirements. If compliance standards like GDPR or HIPAA require self-hosting, eliminate cloud-only frameworks from consideration right away [26][27]. In heavily regulated industries like finance or healthcare, deployment models become a critical filter.

Next, integrate observability tools like LangSmith or Langfuse early in the process. These tools help track token usage, capture errors, and log traces, which is essential for debugging multi-agent systems. Without proper logging, resolving issues can take days [16][4].

Set clear guardrails to manage costs and prevent runaway processes. For example, use max_iterations and token budgets to limit resource consumption. Frameworks that rely on "agent debate" can quickly rack up 10× the expected token usage if termination conditions aren’t in place [16][4].

Finally, keep your business logic separate from framework-specific elements. Store prompts and tool definitions in framework-agnostic functions. This approach avoids code entanglement, making it easier to switch frameworks later if your initial choice doesn’t meet your needs [6][16]. Without a solid infrastructure, production reliability can suffer significantly.

Validation Checkpoints Before Commitment

After scoring your evaluation matrix, it’s time to confirm that your chosen framework is ready for production. This step ensures your assumptions hold up under real-world conditions. Skipping it can lead to costly surprises – like discovering your framework can’t handle production failures or needs an overhaul.

Set Success Criteria Early

Before writing any code, define measurable success criteria. For example:

- Production reliability: Set a target for task completion rates without human intervention. For most enterprise workflows, this is typically 85% or higher [24][4].

- State persistence: Decide if your workflows need to recover from tool timeouts, errors, or process restarts. For multi-hour workflows, checkpointing is essential [28][1].

- Observability: Establish a maximum "mean time to root-cause" for failures. Your framework should allow you to trace exact states at failing nodes and replay runs without guesswork [28][24].

- Cost predictability: Set a per-task budget to avoid runaway costs from unbounded loops [4].

- Human-in-the-loop integration: In regulated industries like healthcare or finance, your framework must support native "pause and resume" features for approvals [28][4].

"The best framework is the one that makes failures obvious, state recoverable, and behavior controllable – before you scale usage." – NeuralLaunchpad [28]

Once you’ve set these criteria, the next step is to test them in a controlled environment.

Run a 2-Week Evaluation Spike

To validate your framework, run a two-week trial with your top two candidates. Here’s how to structure it:

- Week 1: Build a minimal agent and create 20–50 test cases based on real-world failures or manual workflows you’re automating. Establish "ground truth" for tool calls and isolate environments to avoid shared state interference [29][25].

- Week 2: Stress-test the framework by intentionally triggering failures. Simulate missing data, inject tool errors, and pause workflows for human approvals to evaluate recovery capabilities [28].

Key areas to test include:

- Tool-calling reliability: Check if parameter values are contextually accurate and if the agent selects the right tools [29].

- State persistence: Force timeouts or crashes mid-workflow to see if the framework resumes correctly [2][28].

- Step efficiency: Measure how closely the agent’s path aligns with the shortest possible sequence of tool calls [29].

For example, in 2026, Notion’s AI team used Braintrust to scale their issue-resolution rate from 3 fixes per day to 30. By integrating evaluation into their CI/CD pipeline, they identified regressions before users encountered them [29]. Use metrics like pass@1 (success on the first try) for efficiency and pass^k (consistency across trials) for high-stakes workflows [25].

Establish Evaluation Gates

After testing, set clear checkpoints to decide if the framework is ready for production:

- Gate 1: Workflow shape analysis. If your workflow is linear with no loops or branching, a full framework may be overkill – plain tool-calling could be three times faster [14].

- Gate 2: Test against "known bad" cases. Use a dataset of past failures to confirm the framework can detect and handle these scenarios [8].

- Gate 3: Escalation accuracy. Measure how well the agent knows when to involve a human. False negatives (failing to escalate when needed) are major red flags [7].

"A 0% pass rate across many trials is most often a signal of a broken task, not an incapable agent." – Anthropic [25]

Ensure every action triggers the correct API call [9]. Your framework should also provide tools like time-travel debugging or execution traces. Without visibility into why an agent failed, fixing issues in production becomes nearly impossible [2][28]. Set benchmarks like "+5% improvement in correctness" or "no latency degradation" as thresholds for moving forward [19]. These gates help confirm the framework is reliable, cost-efficient, and ready for long-term use.

Conclusion: Making the Recommendation

After thoroughly examining production criteria and conducting rigorous testing, here’s a concise guide to help you make an informed framework choice.

Key Takeaways for Framework Selection

Frameworks influence about 20% of success, while the remaining 80% depends on robust infrastructure, including verification loops, retry logic, and observability [4][24]. When presenting recommendations to leadership, focus on workflow structure rather than framework popularity. For workflows that are linear and lack loops or branching, avoid frameworks altogether – plain tool calling reduces unnecessary latency [14]. However, for more complex workflows involving state persistence and error recovery, graph-based orchestration tools like LangGraph are indispensable.

"The problem isn’t choosing the wrong framework. It’s choosing a framework before understanding the workflow." – TheProdSDE, Senior Engineer [14]

Key criteria for evaluation include:

- Reliability of tool calling

- State persistence for long-running tasks

- Multi-agent coordination capabilities

- Observability for debugging during critical outages

- LLM-agnostic architecture to avoid vendor lock-in

- Total cost of ownership

Conversational frameworks can be 5–6x more expensive per task compared to structured orchestration, mainly due to unbounded loops [2]. Prevent costly surprises by setting hard token budgets and clear termination conditions. This is especially crucial when agent loops generate 10–20 LLM calls per task [4][2].

To make your decision defensible, use the scoring template and protocols outlined earlier. Conduct a 2-week evaluation spike using real-world failure scenarios, not just ideal paths. Simulate crashes mid-workflow to test state recovery and ensure the framework provides detailed traces for root-cause analysis. Set measurable thresholds: if your workflow lacks branching, a full framework may not be necessary. Persistent failures in "known bad" test cases signal poor production readiness.

While these steps can guide your decision, a poor framework choice can lead to significant challenges.

The Cost of Getting It Wrong

The risks of choosing the wrong framework are substantial. A poorly selected framework often leads to expensive rewrites within six months, as production complexity outpaces the initial choice. Under-engineering forces teams to create custom state machines and retry logic, resulting in technical debt that can quickly spiral out of control [1][14]. On the other hand, over-engineering with a complex framework for simple tasks can make systems 3x slower and harder to debug [14]. Using multiple frameworks in production creates operational headaches, such as shared rate-limit conflicts and inconsistent debugging tools. Additionally, vendor lock-in through model-specific SDKs can generate high migration costs, derailing future optimization plans [1][4].

"The framework matters less than people think. What will determine if an agent is reliable or not is the infrastructure around it." – CowrieDev, Developer [3]

The financial stakes are high. With inference costs projected to make up 55% of AI cloud spending – roughly $37.5 billion by early 2026 – choosing a conversational framework without strict termination conditions can lead to runaway expenses [4][5]. In fact, 49% of organizations cite high inference costs as the top barrier to scaling AI agents [4]. By adhering to a structured evaluation framework, you can mitigate these risks and ensure a balanced trade-off between speed, stability, and cost before entering production.

FAQs

How do I choose weights for the scoring matrix?

To determine weights for your scoring matrix, align them with the main goals of your project. If stability is a top priority, concentrate on essential factors like tool-calling reliability, state persistence, and observability. Distribute the weights proportionally, making sure they add up to 100% or 1.0. Refine these weights through testing and feedback, tailoring them to your team’s strengths and the elements that most influence performance and ease of maintenance.

What failure tests should we run in a 2-week spike?

Focus on these priorities during your 2-week spike: tool-calling reliability, testing edge cases, identifying infinite loops, simulating failure scenarios, verifying state persistence and recovery, and assessing multi-agent coordination. These tests are crucial for spotting critical failure points and ensuring the agent performs reliably in production. Adapt the tests to fit your specific use case to achieve the most effective outcomes.

When is plain tool-calling enough vs a full agent framework?

Plain tool-calling is great for straightforward tasks – like running a search query or pulling data – where there’s no need to manage state, coordinate multiple agents, or handle detailed debugging. But when workflows get more complex, involving things like persistent state, retries, debugging, or multi-agent coordination, you’ll need a full agent framework. This ensures the system can handle the complexity and remain reliable and manageable in a production setting.

Related Blog Posts

- What Engineering Teams Underestimate About AI Integration

- Architectural Trade-offs When Introducing AI into Existing Systems

- AI Coding Tools in 2026: What We Actually Use Across 20+ Client Projects (And What We Don’t)

- LangGraph vs CrewAI vs AutoGen: How We Evaluated All Three Before Recommending One for a Production Deployment

Leave a Reply